
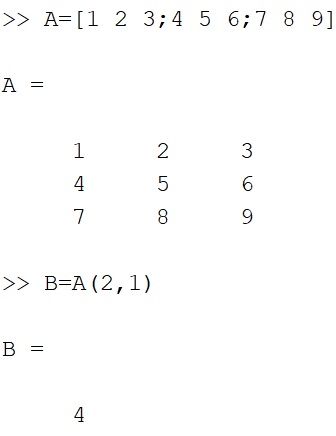

Getting Octave to compile from source with ATLAS is not easy and takes some programming skill. I was unable to get this to work on Fedora, but I could on Gentoo. I am using three libraries to speed things up; if Octave can use these instead of the default BLAS and LAPACK libraries, it will utilize multiple cores.

I ran the same Octave code, timed with `tic`, before and after installing ATLAS:

- Without ATLAS: Elapsed time is 3.22819 seconds.
- With ATLAS: Elapsed time is 0.529 seconds.

The matrix multiplication goes much faster using multiple processors: by these timings, roughly six times faster than before on a single core.
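A timing run of this kind can be sketched as follows. The original benchmark code is not reproduced here, so the matrix size `n` and the variable names are assumptions chosen only for illustration; the same script would be run once before and once after linking Octave against ATLAS.

```octave
% Hypothetical benchmark: the size below is illustrative, not the author's.
n = 1000;                 % assumed size; increase it for a longer run
A = rand (n, n);          % dense random operands
B = rand (n, n);

tic;                      % start the wall-clock timer
C = A * B;                % dense product, delegated to the linked BLAS library
elapsed = toc;            % seconds elapsed since tic

printf ("Elapsed time is %g seconds.\n", elapsed);
```

Comparing the printed time across the two builds gives the speedup; the absolute numbers depend on the machine and on how ATLAS tuned itself.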

# GNU Octave matrix operations install

When you install Octave from source and specify ATLAS, Octave uses it: when Octave performs a heavy operation such as a huge matrix multiplication, ATLAS decides how many CPUs to use. ATLAS tunes itself to your system and its number of cores.

# GNU Octave matrix operations code

Octave itself is a single-threaded application that runs on one core. You can, however, get Octave to use libraries like ATLAS that utilize multiple cores; this may be what Matlab does. So while Octave only uses one core, when it encounters a heavy operation it calls functions in ATLAS that utilize many CPUs. First compile ATLAS from source and make it available to your system so that Octave can find it and use its library functions.

For Sparse Matrix OPERATION Scalar NaN, the code would be easy to modify to check for NaN and return accordingly. For Matrix OPERATION Matrix operations it becomes a bit annoying, because one would have to check isnan() on every element.
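The scalar case can be sketched in Octave itself, purely as an illustration of the check; the real change would live in Octave's C++ sources. The function name `sparse_times_scalar` is hypothetical and is not part of Octave, and the sketch covers multiplication only, on the assumption that a NaN scalar should turn every element into NaN.

```octave
1;  % non-function first statement, so this file is treated as a script

% Hypothetical helper, not part of Octave: sparse matrix times scalar.
function Z = sparse_times_scalar (S, c)
  if (isnan (c))
    % 0 * NaN is NaN under IEEE rules, so every element becomes NaN
    % and the result has to be stored as a full matrix.
    Z = NaN (size (S));
  else
    % Ordinary case: zeros stay zero, so the result can remain sparse.
    Z = S * c;
  endif
endfunction

S = sparse ([1 0; 0 2]);
Z = sparse_times_scalar (S, NaN);
disp (Z);
```

The single `isnan (c)` test is what makes this case cheap: only the scalar is inspected, never the individual matrix elements.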

# GNU Octave matrix operations full

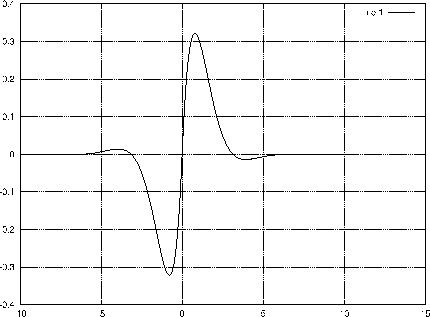

Pseudo-code might work as a first approach for `Z = X op Y`; of course, all of that would need to be in C++. The subsequent decision, about whether to convert to full if it would save space, should probably be left up to Octave, or to the sparse_auto_mutate code.

Another choice would be to look carefully at the code which performs the operation 'op'. For sparse matrices, I believe the code is equivalent to `elements_to_operate_on = intersection (nonzero1, nonzero2)` followed by `Z = X(elements_to_operate_on) op Y(elements_to_operate_on)`. The "intersection" function for sparse matrices in C++ could be changed to include elements where only one of the matrices had a NaN.

Comment #6 (Mon 05:49:15 PM UTC): The memory problem already exists. After one of the operations involving NaN, every single element will become NaN and require storage. In fact, the situation will be worse than with a full matrix because there will be the extra overhead of the sparse matrix implementation.

In general, even without sparse_auto_mutate set to true, Octave will convert to full for operations that are likely to result in a full matrix. The documentation (22.1.4.2 Return Types of Operators and Functions) explains that the two basic reasons to use sparse matrices are to reduce memory usage and to avoid calculations on zero elements. The two are closely related in that the computation time on a sparse matrix operator or function is roughly linear with the number of nonzero elements. Therefore, there is a certain density of nonzero elements at which it no longer makes sense to store a matrix as sparse rather than as a full matrix. Operators and functions that have a high probability of returning a full matrix will therefore always return one. For example, adding a scalar constant to a sparse matrix will almost always make it a full matrix.

For addition and subtraction, the sparse matrices are already converted to full, the NaN is added or subtracted, and the operation follows IEEE guidelines.
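The return-type rule from the documentation can be checked with a short sketch. The variable names are illustrative; `speye`, `issparse`, and `printf` are standard Octave functions.

```octave
S = speye (3);            % 3x3 sparse identity matrix
F = S + 0;                % adding a scalar constant: expected to return full
P = S * 2;                % scaling keeps zeros as zeros: expected to stay sparse

printf ("issparse (S) = %d\n", issparse (S));
printf ("issparse (F) = %d\n", issparse (F));
printf ("issparse (P) = %d\n", issparse (P));
```

Addition with a scalar touches every element, which is why the result is handed back as a full matrix, while scaling leaves the zero pattern untouched.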

At a minimum, Octave needs to check whether each operand contains a NaN and it needs to specifically guarantee that those locations result in NaN at the output. At least we understand what behavior needs to be implemented.
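The required behavior can be sketched at the script level for elementwise multiplication. This is only an illustration of the guarantee, not how Octave implements it; the function name `nan_safe_times` is hypothetical, and a real fix would be made in the C++ sparse code.

```octave
1;  % non-function first statement, so this file is treated as a script

% Hypothetical helper, not part of Octave: elementwise product that
% guarantees NaN wherever either operand holds a NaN.
function Z = nan_safe_times (X, Y)
  Z = X .* Y;                             % ordinary sparse elementwise product
  idx = find (isnan (X) | isnan (Y));     % locations with a NaN in either operand
  Z(idx) = NaN;                           % force NaN at exactly those locations
endfunction

X = sparse ([1 0; NaN 2]);   % NaN at (2,1)
Y = sparse ([0 3; 0 4]);     % zero at (2,1)
Z = nan_safe_times (X, Y);
disp (full (Z));
```

Only the locations that actually hold a NaN are touched, so the result stays sparse unless the NaNs themselves fill the matrix.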